Social media has become a defining infrastructure of modern life, shaping how people learn, communicate, and participate in society. Yet the same systems that enable connection can also amplify harm. Misinformation spreads faster than corrections, harassment can silence vulnerable voices, and opaque algorithms influence public perception in ways few users fully understand. Enhancing safety and accountability on these platforms requires more than reactive fixes; it demands a deliberate reimagining of how digital spaces are governed and how people are empowered within them.

"The point of modern propaganda isn't only to misinform or push an agenda. It is to exhaust your critical thinking, to annihilate truth."

A foundational strategy is increasing transparency. Today, the mechanisms that determine what content is promoted, flagged, or removed operate largely behind closed doors. Opening these systems to independent auditing - by researchers, regulators, and civil society - would illuminate how information flows and where risks emerge. Transparency is not about exposing proprietary code; it's about acknowledging that when a platform becomes a central public forum, its decision-making processes become matters of public concern.

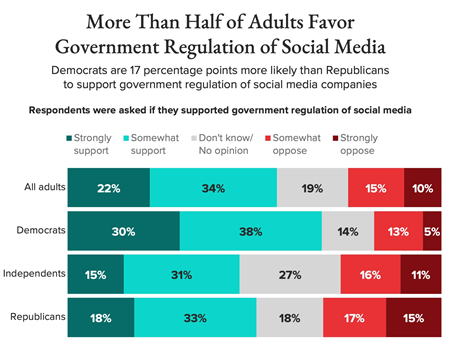

Alongside transparency, platforms need stronger, enforceable standards for responsible operation. Voluntary self-regulation has repeatedly fallen short, often responding to crises only after harm has already occurred. Establishing clear rules for content moderation, data use, and algorithmic design - paired with independent oversight bodies capable of investigating and enforcing those rules - creates a baseline of accountability. This approach mirrors how society governs other powerful industries: not to stifle innovation, but to ensure that public well-being is not an afterthought.

User empowerment is another essential pillar. People should have meaningful control over their online experience, including the ability to understand why content is recommended, adjust personalization settings, and opt out of targeted data practices. When users can shape their own digital environments, they become less vulnerable to manipulation and more capable of navigating online spaces safely. Empowerment shifts the balance from platforms dictating the experience to users actively participating in it.

Finally, strengthening digital literacy is critical for long-term resilience. Even the most transparent and well-regulated platforms cannot eliminate all risks. Teaching people how to evaluate sources, recognize coordinated manipulation, and understand how algorithms influence what they see equips society to respond thoughtfully rather than reactively. A digitally literate public is better positioned to demand responsible design and hold platforms to account.

Enhancing safety and accountability on social media is ultimately about aligning powerful technologies with the public good. It requires transparent systems, enforceable standards, empowered users, and a society capable of navigating complex information landscapes. When these strategies work together, they create a healthier digital ecosystem - one where people can participate freely without sacrificing their security, dignity, or trust in the world around them.